Google BigQuery

Google BigQuery is an enterprise data warehouse that allows you to store and query massive datasets at high speed using Google's infrastructure

Official Websitehttps://cloud.google.com/bigquery

TagsdatabaseSaas

JavaScript

Python

NodeJS packagehttps://www.npmjs.com/package/@google-cloud/bigquery

Version6.1.0

Pypi packagehttps://pypi.org/project/google-cloud-bigquery/

Version3.16.0

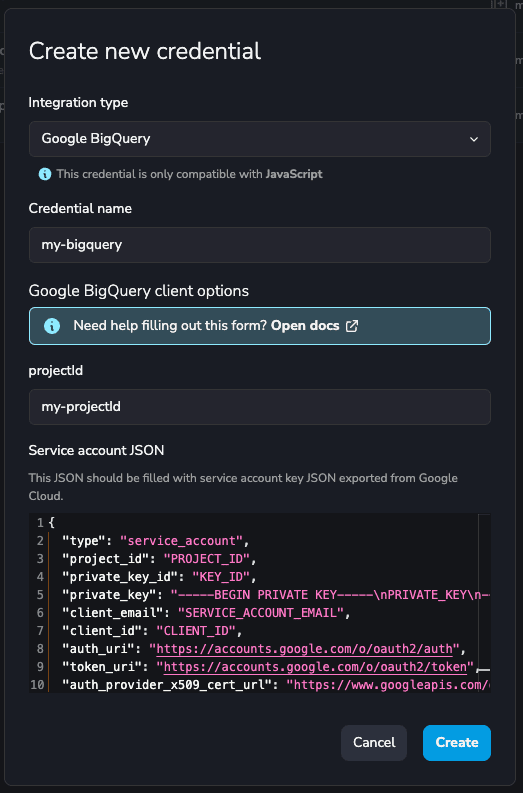

Credential configuration

The projectId corresponds to the project ID from the Google Developer's Console.

The credentials field of the JSON corresponds to a JSON key of the service account. This key

is downloaded as a JSON file when you create it. It should be something like this:

{

"type": "service_account",

"project_id": "PROJECT_ID",

"private_key_id": "KEY_ID",

"private_key": "-----BEGIN PRIVATE KEY-----\nPRIVATE_KEY\n-----END PRIVATE KEY-----\n",

"client_email": "SERVICE_ACCOUNT_EMAIL",

"client_id": "CLIENT_ID",

"auth_uri": "https://accounts.google.com/o/oauth2/auth",

"token_uri": "https://accounts.google.com/o/oauth2/token",

"auth_provider_x509_cert_url": "https://www.googleapis.com/oauth2/v1/certs",

"client_x509_cert_url": "https://www.googleapis.com/robot/v1/metadata/x509/SERVICE_ACCOUNT_EMAIL"

}

If you don't have one, create a service account and then, a JSON key for that service account.

info

Ensure to grant permissions over BigQuery to this service account.

Here is an example of a filled credential configuration form in YepCode:

Google BigQuery Snippets available in YepCode editor

note

The title is the triggering text for YepCode to autocomplete the script.

JavaScript

Python

Integration

New integration from credential

const googleBigQueryClient = yepcode.integration.googleBigQuery("credential-slug");

New integration from plain authentication data

const { BigQuery } = require("@google-cloud/bigquery");

const googleBigQueryCredentials = {

projectId: "YepCode",

credentials: {

type: "service_account",

project_id: "yepcode",

private_key_id: "XXXXX",

private_key: "-----BEGIN PRIVATE KEY-----\nx\n-----END PRIVATE KEY-----",

client_email: "yepcode@example.org",

client_id: "1234567890",

auth_uri: "https://example.org",

token_uri: "https://example.org",

auth_provider_x509_cert_url: "https://example.org",

client_x509_cert_url: "https://example.org",

}

};

const googleBigQueryClient = new BigQuery(googleBigQueryCredentials);

Create a Dataset

Create a dataset

// Specify the geographic location where the dataset should reside

const options = {

location: "dataset-location",

};

const [dataset] = await googleBigQueryClient.createDataset("dataset-id", options);

console.log(`Dataset ${dataset.id} created.`);

List Datasets

List datasets

// Lists all datasets in current project

const [datasets] = await googleBigQueryClient.getDatasets();

console.log('Datasets:');

datasets.forEach(dataset => console.log(dataset.id));

Delete a Dataset

Delete a dataset

// Create a reference to the existing dataset

const dataset = googleBigQueryClient.dataset("dataset-id");

await dataset.delete({force: true});

console.log(`Dataset ${dataset.id} deleted.`);

Query to a Dataset

Query to a dataset

const query = "your-SQL-query";

// For all options, see https://cloud.google.com/bigquery/docs/reference/rest/v2/jobs/query

const options = {

query: query,

// Location must match that of the dataset(s) referenced in the query.

location: "dataset-location",

};

// Run the query as a job

const [job] = await googleBigQueryClient.createQueryJob(options);

console.log(`Job ${job.id} started.`);

// Wait for the query to finish

const [rows] = await job.getQueryResults();

// Use the result

Integration

New integration from credential

big_query_client = yepcode.integration.google_big_query("credential-slug")

New integration from plain authentication data

from google.cloud.bigquery.client import Client

from google.oauth2.service_account import Credentials

project_id = "yepcode"

credentialsDict = {

"type": "service_account",

"project_id": "yepcode",

"private_key_id": "XXXXX",

"private_key": "-----BEGIN PRIVATE KEY-----\nx\n-----END PRIVATE KEY-----",

"client_email": "yepcode@example.org",

"client_id": "1234567890",

"auth_uri": "https://example.org",

"token_uri": "https://example.org",

"auth_provider_x509_cert_url": "https://example.org",

"client_x509_cert_url": "https://example.org",

}

credentials = Credentials.from_service_account_info(credentialsDict)

big_query_client = Client(project=project_id, credentials=credentials)

Create a Dataset

Create a dataset

from google.cloud import bigquery

dataset_name = "dataset-name"

dataset_id = f"{big_query_client.project}.{dataset_name}"

dataset = bigquery.Dataset(dataset_id)

dataset.location = "dataset-location"

dataset = big_query_client.create_dataset(dataset)

List Datasets

List datasets

datasets = list(big_query_client.list_datasets())

for dataset in datasets:

print(dataset.dataset_id)

Delete a Dataset

Delete a dataset

dataset_name = "dataset-name"

dataset_id = f"{big_query_client.project}.{dataset_name}"

big_query_client.delete_dataset(dataset_id, delete_contents=True, not_found_ok=True)

Query to a Dataset

Query to a dataset

dataset_id = "dataset-id"

table_id = "table-id"

query=f"SELECT * FROM `{big_query_client.project}.{dataset_id}.{table_id}`"

query_job = big_query_client.query(query, location="dataset-location")

rows = query_job.result()

for row in rows:

print(row)

Insert Data to a Table

Insert data to a table

rows_to_insert = [("Foo", 1), ("BAR", 2)]

table_ref = big_query_client.dataset("dataset-id").table("table-id")

table = big_query_client.get_table(table_ref)

errors = big_query_client.insert_rows(table, rows_to_insert)

if errors == []:

print("New rows have been added.")

else:

print("Encountered errors while inserting rows: {}".format(errors))